Transparent objects seem ordinary in daily life, but for robots, they are some of the most difficult targets to see and handle. Clear bottles, plastic containers, medical vials, lamp covers, and translucent packaging can all behave unpredictably under light. Instead of producing clean, stable visual signals, they often create weak reflections, distorted contours, or confusing glare.

That is where the real challenge begins. In industrial automation, a robot must do more than simply “see” an object. It needs to detect it accurately, understand its shape and pose, and then plan a safe, reliable grasp. When an object is transparent, each of those steps becomes harder.

This is why transparent object handling has long been considered a classic problem in machine vision. It is not just a matter of better lighting or a sharper camera. It is a perception problem that sits at the intersection of optics, imaging, and intelligent robot control.

Why Traditional Vision Systems Struggle

Most conventional vision systems are designed around visible surface features: edges, texture, contrast, and consistent light reflection. Transparent objects do not offer these features in a stable way.

The first issue is refraction. When light passes through a transparent material, it bends. That bending changes the way the object appears to the camera, often making its outline look incomplete or inaccurate. A bottle or container may look shifted, stretched, or partially invisible depending on the viewing angle.

Refraction leads to apparent shape distortion in transparent objects.
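
To get a feel for how much refraction can shift what the camera sees, the sketch below applies Snell's law to a single ray crossing a flat transparent wall. It is an idealized illustration, not a description of any particular vision system: the flat-slab geometry, the refractive index of 1.5 (a common textbook value for glass), and the function name `lateral_shift_mm` are all assumptions made for the example.

```python
import math


def lateral_shift_mm(thickness_mm, incidence_deg, refractive_index=1.5):
    """Sideways displacement of a ray after crossing a flat transparent slab in air.

    Assumes an idealized slab with parallel faces; real bottles and vials are
    curved, so their distortion is more complex than this.
    """
    theta_in = math.radians(incidence_deg)
    # Snell's law with air (n ~ 1.0) outside: sin(theta_in) = n * sin(theta_inside)
    theta_inside = math.asin(math.sin(theta_in) / refractive_index)
    # Parallel-slab result: d = t * sin(theta_in - theta_inside) / cos(theta_inside)
    return thickness_mm * math.sin(theta_in - theta_inside) / math.cos(theta_inside)


# Hypothetical 5 mm wall viewed at several angles.
for angle in (0, 15, 30, 45, 60):
    shift = lateral_shift_mm(thickness_mm=5.0, incidence_deg=angle)
    print(f"incidence {angle:>2} deg -> ray shifted by {shift:.2f} mm")
```

Under these assumptions, a 5 mm wall viewed at 45 degrees shifts the ray by roughly 1.6 mm, and the shift grows with the viewing angle. That is consistent with outlines that appear to move as the camera or the object changes position.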

The second issue is low contrast. Transparent materials often blend into the background, especially when the surrounding scene is similarly colored or highly reflective. Without clear contrast, it becomes difficult for algorithms to separate object from environment.

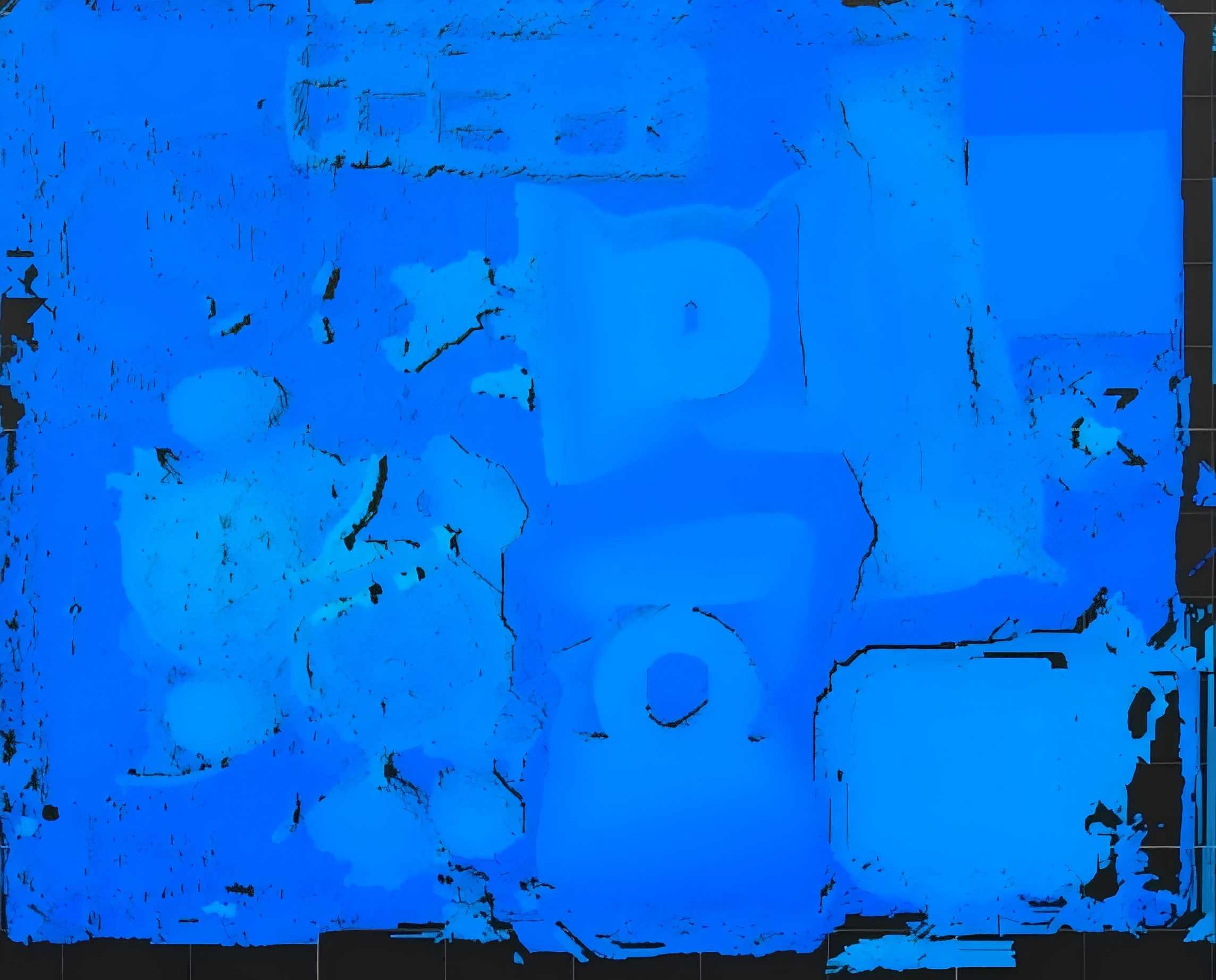

Low contrast reduces object-background separation and blurs point cloud results.
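
One simple way to put a number on "low contrast" is to compare the mean brightness of the object region with that of the background. The sketch below uses a Michelson-style ratio for this. The function name `region_contrast` and the pixel values are invented for illustration, and a real pipeline would work on full images rather than short lists.

```python
def region_contrast(object_pixels, background_pixels):
    """Michelson-style contrast between the mean intensities of two regions.

    Returns a value in [0, 1]: 0 means the regions are indistinguishable by
    brightness, values near 1 mean a strong difference.
    """
    mean_obj = sum(object_pixels) / len(object_pixels)
    mean_bg = sum(background_pixels) / len(background_pixels)
    total = mean_obj + mean_bg
    if total == 0:
        return 0.0  # both regions are completely black
    return abs(mean_obj - mean_bg) / total


# Hypothetical 8-bit grayscale samples (0-255), invented for illustration.
background = [200, 198, 202, 201]
opaque_part = [60, 62, 58, 61]
clear_bottle = [190, 193, 188, 191]

print(f"opaque part:  {region_contrast(opaque_part, background):.3f}")
print(f"clear bottle: {region_contrast(clear_bottle, background):.3f}")
```

With these made-up samples, the opaque part scores above 0.5 while the clear bottle scores below 0.05, which is the kind of gap that makes threshold-based or edge-based segmentation unreliable on transparent materials.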

The third issue is interreflection. Light may bounce between the object surface and surrounding surfaces, creating ghosting, glare, or false edges. These artifacts can mislead a vision system into detecting the wrong shape or location.

Interreflection refers to light reflecting multiple times between the surfaces of a complex-shaped object, or between the object and nearby surfaces.

For humans, these effects are often obvious once we know what to look for. For robots, they can completely undermine recognition and picking reliability.

Why the Problem Is Harder Than It Looks

Transparent object handling is difficult not only because the object is hard to detect, but because the whole downstream workflow becomes unstable.

If the robot cannot estimate the object’s pose with confidence, grasping accuracy drops. If the point cloud is incomplete, the robot may misjudge the object boundary. If the visual signal changes from frame to frame, picking behavior becomes inconsistent. And in high-speed automation, inconsistency quickly turns into downtime, rework, or missed cycle times.
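
The frame-to-frame instability described above can be turned into a simple gate before the robot commits to a pick. The following is a minimal sketch, assuming the vision system reports an (x, y, z) position estimate per frame in millimeters. The function names and the 0.5 mm tolerance are hypothetical placeholders, not values from any real deployment.

```python
import math


def position_jitter_mm(positions):
    """RMS distance of per-frame position estimates from their mean, in mm."""
    n = len(positions)
    mean = [sum(p[axis] for p in positions) / n for axis in range(3)]
    squared = [sum((p[axis] - mean[axis]) ** 2 for axis in range(3)) for p in positions]
    return math.sqrt(sum(squared) / n)


def is_stable_enough(positions, tolerance_mm=0.5):
    """True if the estimate moves no more than the tolerance across frames.

    The 0.5 mm default is an arbitrary placeholder, not a recommended value.
    """
    return position_jitter_mm(positions) <= tolerance_mm


# Hypothetical (x, y, z) estimates of one object over three frames, in mm.
steady = [(100.0, 50.0, 20.0), (100.2, 49.9, 20.1), (99.9, 50.1, 19.9)]
flickering = [(100.0, 50.0, 20.0), (103.0, 48.0, 22.0), (97.0, 52.0, 18.0)]

print(is_stable_enough(steady))
print(is_stable_enough(flickering))
```

A check like this does not fix the underlying imaging problem. It only makes the instability visible, so the cell can re-capture or skip the object instead of attempting an unreliable grasp.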

This is why the problem cannot be solved by a smarter recognition algorithm alone. The imaging system must first produce data that is stable enough for the robot to act on. In other words, transparent object handling is a full-stack challenge: optics, 3D imaging, algorithmic enhancement, and motion execution all have to work together.

What a Better Imaging Approach Looks Like

A more effective approach to transparent object handling starts with better visual input.

Instead of relying only on surface appearance, advanced 3D vision systems use structured imaging, depth information, and optimized optical design to capture more useful geometric data. That gives the robot a better chance of understanding the actual spatial form of the object, even when the surface itself is visually deceptive.

In transparent scenarios, the imaging system also needs to be robust against weak reflections and interreflection interference. That means the system must do more than “see through” the object. It must also suppress misleading optical noise and preserve enough structure in the image or point cloud for downstream recognition and grasp planning.
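
As a generic illustration of suppressing optical noise while preserving structure, the sketch below fuses several depth captures with a per-pixel median and drops pixels that too few frames could measure. This is a common textbook technique, shown here under stated assumptions. It is not a description of Mech-Mind's algorithms, and the function names, the `min_valid` parameter, and the sample depth values are all hypothetical.

```python
from statistics import median


def fuse_depth_frames(frames, min_valid=2):
    """Per-pixel median over several depth frames; None marks a missing reading.

    A pixel is kept only if at least `min_valid` frames measured it, so that
    isolated readings (for example from a stray reflection) are dropped.
    """
    rows, cols = len(frames[0]), len(frames[0][0])
    fused = []
    for r in range(rows):
        fused_row = []
        for c in range(cols):
            readings = [f[r][c] for f in frames if f[r][c] is not None]
            fused_row.append(median(readings) if len(readings) >= min_valid else None)
        fused.append(fused_row)
    return fused


def valid_ratio(depth):
    """Fraction of pixels that carry a depth value."""
    pixels = [v for row in depth for v in row]
    return sum(v is not None for v in pixels) / len(pixels)


# Three hypothetical 2x2 depth frames in mm; 800.0 stands in for a glare outlier.
frames = [
    [[500.0, None], [502.0, 800.0]],
    [[501.0, None], [None, 510.0]],
    [[499.0, 620.0], [503.0, 509.0]],
]
fused = fuse_depth_frames(frames)
print(fused)
print(valid_ratio(fused))
```

In this toy example the median discards the 800.0 outlier, and the pixel seen in only one frame is left empty rather than trusted. The trade-off is extra capture time, which matters in high-speed cells.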

This is where specialized industrial 3D vision becomes especially important. High-quality imaging is not only about resolution. It is about how much reliable information the system can extract from difficult materials under real factory conditions.

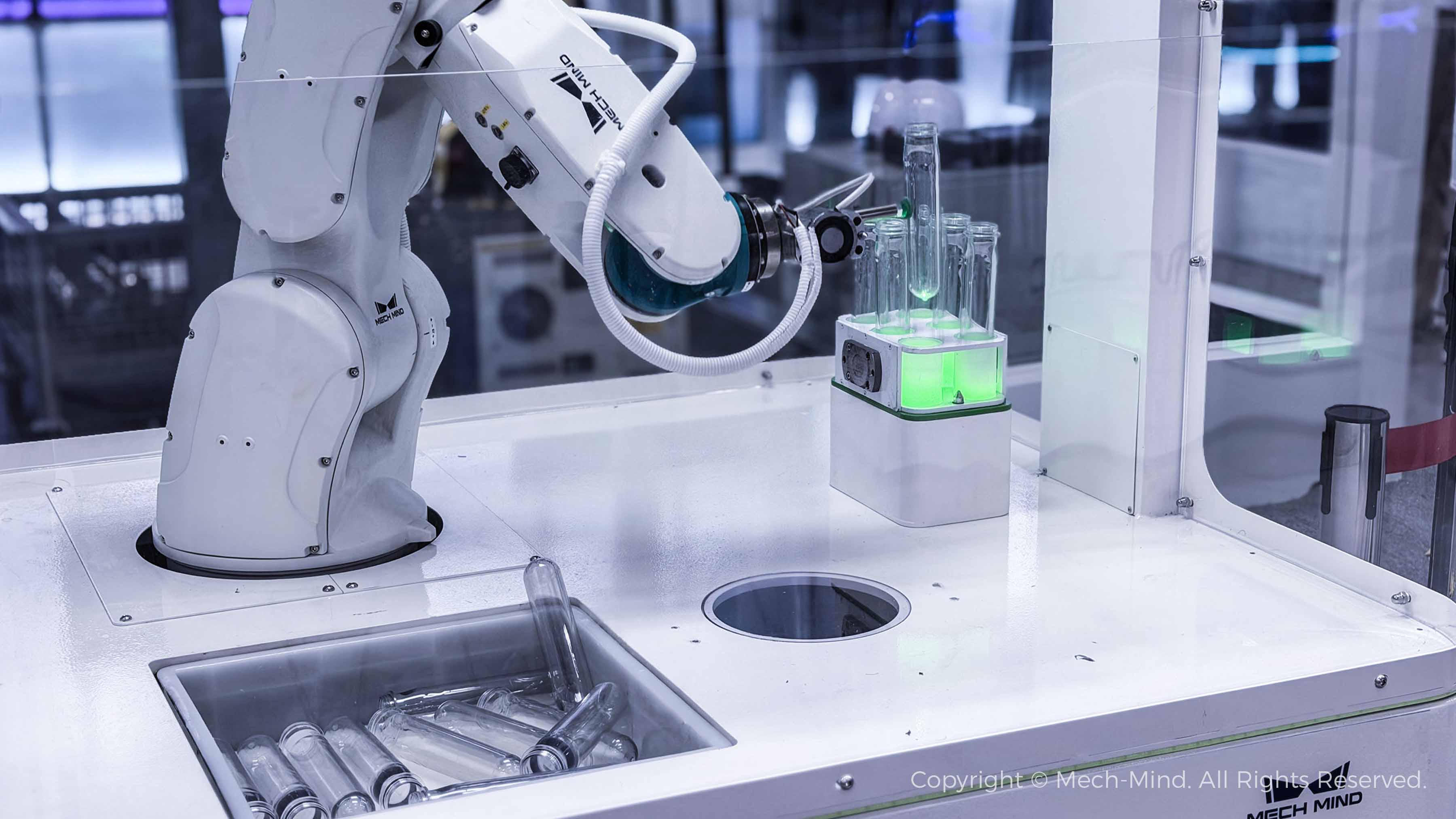

Mech-Mind "Eye + Brain" for Robots: High-Precision Picking of Transparent Tubes

Mech-Mind’s Technical Advantage in Transparent Imaging

Mech-Mind focuses on solving these difficult perception problems with advanced 3D vision and imaging algorithms designed for industrial use. In transparent object scenarios, the key advantage lies in producing more stable, higher-quality visual input for the robot.

By combining optimized optical design with dedicated transparent imaging algorithms, Mech-Mind helps improve image clarity, suppress interference from weak or multiple reflections, and deliver more complete point clouds. That makes transparent and translucent objects easier for robots to detect, localize, and grasp.

Just as important, this capability is not limited to transparent objects. The same imaging foundation also supports other challenging applications such as thin-walled parts, reflective surfaces, and fast-moving objects in complex environments. For automation teams, that means one perception platform can cover multiple difficult scenarios instead of solving each one separately.

Mech-Mind "Eye + Brain" for Robots: Generalized Picking of Transparent Objects

Why This Matters in Real Automation

The value of better transparent imaging is easy to understand when you look at the production floor.

In pharmaceutical and medical applications, robots may need to handle transparent vials, bottles, and packaging materials with high precision.

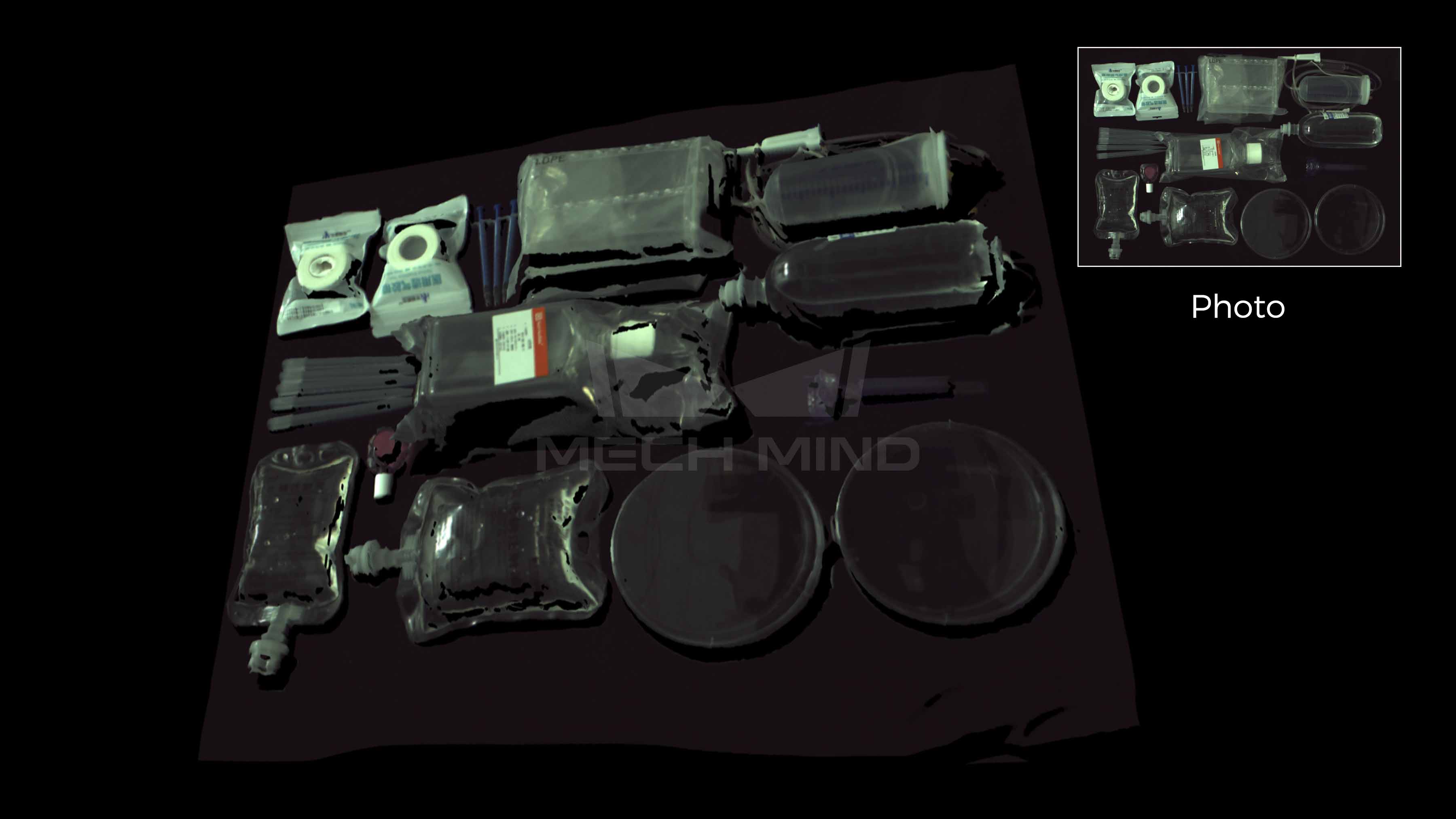

Point clouds of transparent/translucent medical supplies

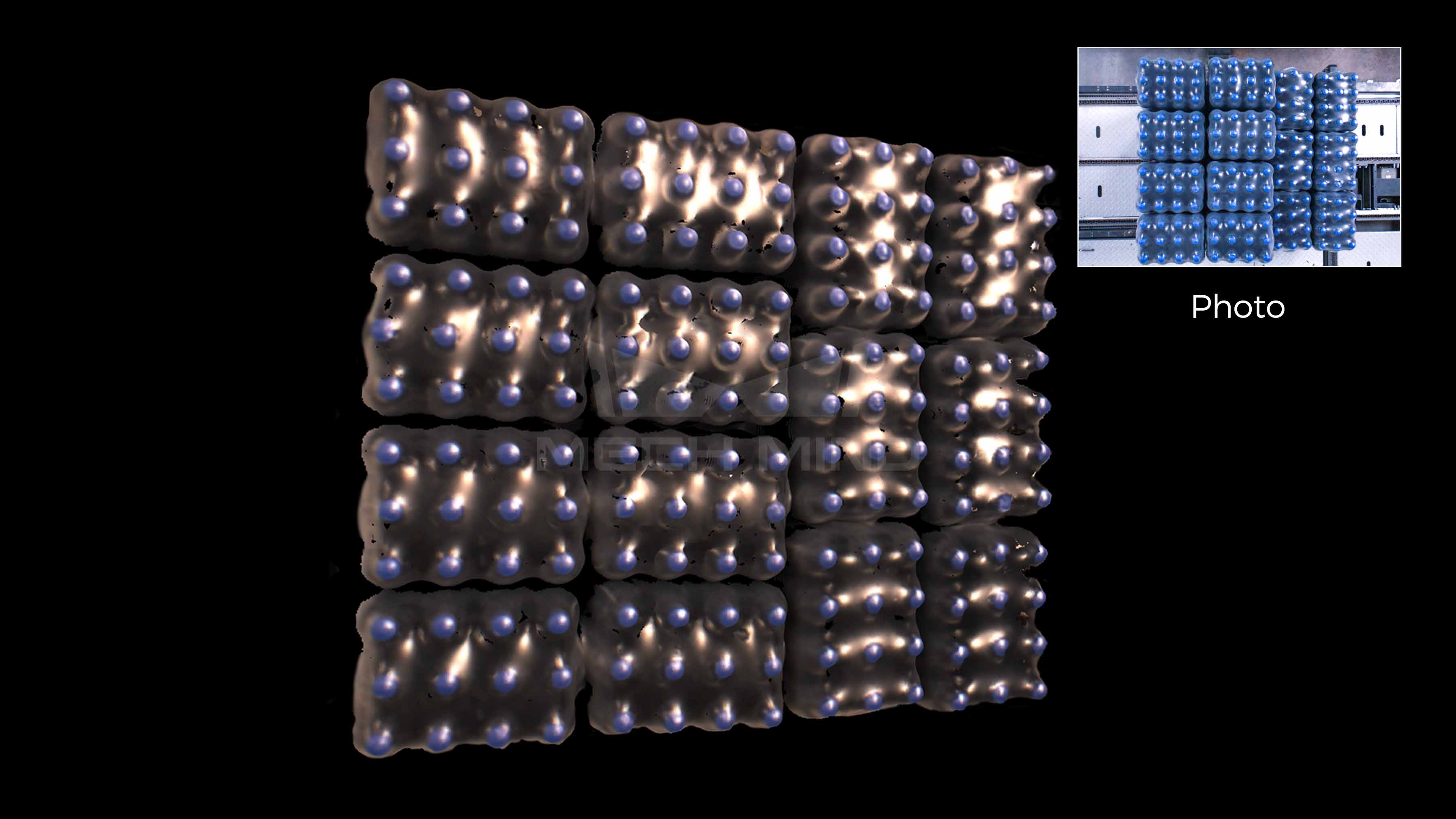

In retail and logistics, transparent containers and plastic packaging are common in sorting and picking workflows.

Point clouds of transparent/translucent packaged retail goods

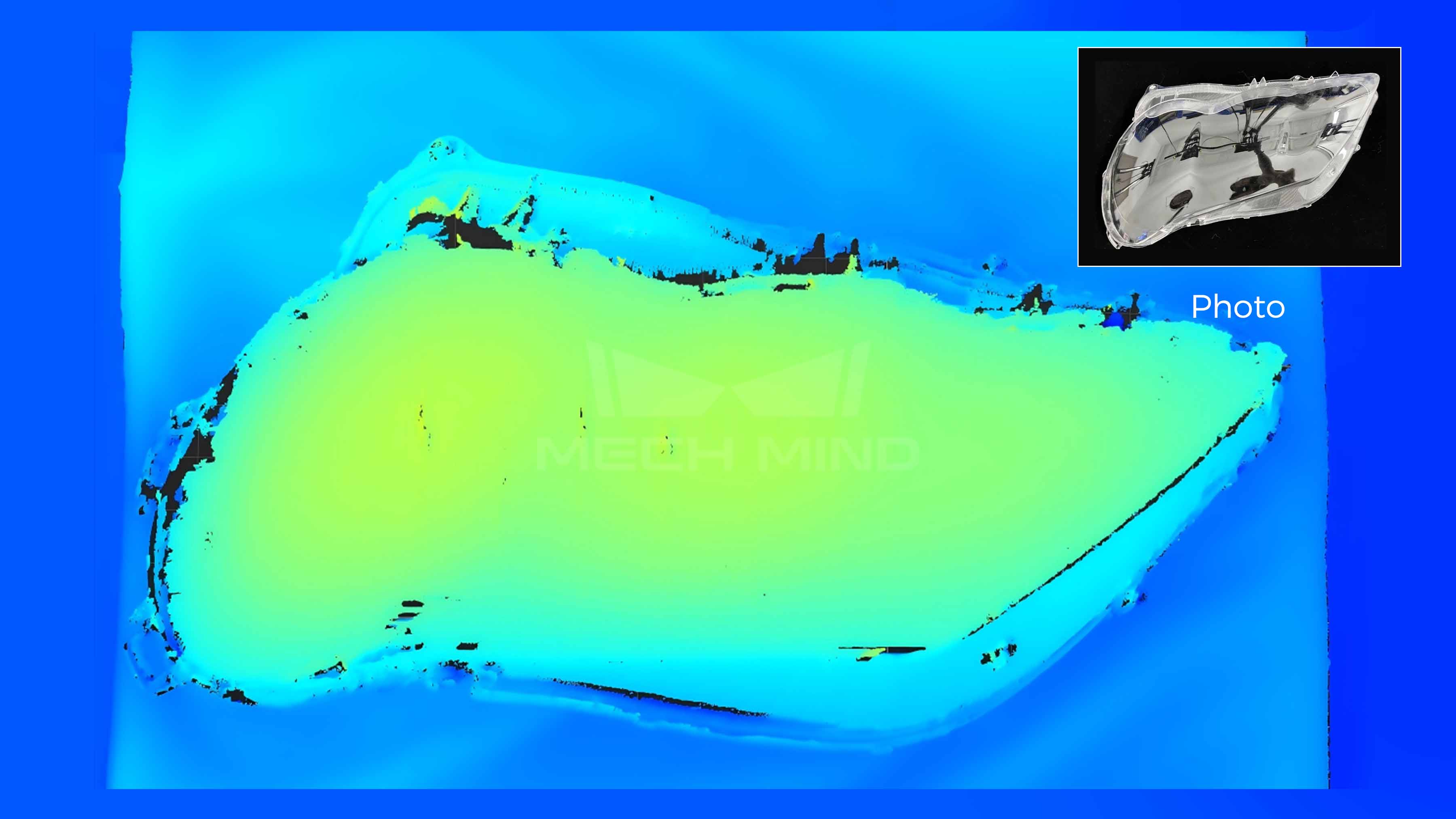

In automotive and manufacturing, transparent or translucent parts such as lamp covers and thin-walled components can be hard to image reliably.

Point clouds of transparent automotive lamp cover

In the food and beverage industry, mixed packaging materials and variable lighting often make vision even more difficult.

Point clouds of uneven, transparent shrink-wrapped bottles

In all of these cases, the core need is the same: the robot must receive clean enough visual data to make stable decisions. When transparent imaging improves, picking reliability improves. And when picking reliability improves, automation becomes easier to deploy and scale.

From a Vision Challenge to an Automation Opportunity

Transparent objects expose one of the most fundamental truths in robotics: a robot is only as good as what it can perceive.

That is why transparent object handling remains such an important benchmark for industrial vision systems. It forces the system to deal with optical complexity, environmental variability, and real-time robot execution all at once. Solving it requires more than incremental improvement. It requires a perception strategy built for the realities of industrial automation.

With advanced 3D vision and transparent imaging algorithms, Mech-Mind is helping robots move beyond surface-level perception toward a more reliable understanding of the world around them. Building on this perception foundation, the system generalizes well, adapting to different transparent objects and production scenarios without extensive reconfiguration. That is not just a technical improvement. It is a practical step toward smarter, more capable, and more scalable automation.

Transparent objects may be difficult to see. But with the right imaging technology, they do not have to be difficult to handle.